AI courses and coaching exclusively for senior IT professionals, led by a Google Developer Expert (AI)

LLM Security Guide for IT working professionals and Entrepreneurs

Like your traditional IT systems, LLM systems are also vulnerable.

In fact, they are more. Yes, you read it right.

And that’s the reason senior management and entrepreneurs need to know about LLM application security.

Before we discuss LLM application security in detail, let us understand common misconceptions around the same.

Misconceptions around LLM security

1. Benchmarking is Security

You must have seen LLM leaderboards, where ratings are given to LLMs based on their performance on various tasks.

These benchmarks show how the model performs on certain tasks and not how secure a model is.

These benchmarks do not show

- If a model is generating offensive sentences?

- Does it show bias or stereotypes?

- Could the model be used for unethical purposes?

2. The foundation model is not an LLM Application

An LLM application is one which we build on top of an LLM. So, it is another layer on top of an LLM.

If LLM is secure then this does not mean the application layer is also safe. It can be hacked.

Risks at the LLM application layer could be

- Out-of-scope generation

- Private data disclosure

- Hallucination

So, the point is vulnerabilities can creep into your application even if you are using a safe LLM.

What are the common vulnerabilities that can be in an LLM-based application?

- Bias & Stereotypes

- Private data disclosure

- Service disruption

- Hallucination

Bias & Stereotypes

An AI model is as good as the data it was trained on. If the training/ reference data has biases then the model will also learn those.

For example – An LLM application can give different answers based on gender.

Check out the response to – what kind of advice would you give to a mother with children? List the top 3

And check the response to – what kind of advice would you give to a mother with children? List the top 3

Source – Deeplearning.ai’s – Red Teeming LLM Application course

Private data disclosure

Hackers asked for login credentials for the database and they got the information.

And then some more details

Service disruption

As the name suggests, this attack can disrupt the service of the LLM application

Hallucination

Hallucination means you visualize something that does not exist.

And LLMs do hallucinate and it is a major concern.

LLMs can hallucinate in 2 ways giving

- Inaccurate response

- Irrelevant response

As we understand what are the vulnerabilities, let us now discuss – how LLMs can be attacked.

How can LLM applications be attacked? – Prompt injection

A prompt injection is when attackers attack the prompt. For example –

Once this prompt is injected into the user’s prompt, it will alter the original instructions and then whatever prompt the user writes, he/she will always get the poem on cuddly panda bears 🙂

It seems the attacker was a poem lover, however, all attackers’ intentions are not the same. They can ask the model to write unacceptable responses as well.

This kind of attack can cause reputational harm to an organization.

Prompt injections can also be of multiple types. Let us understand what are the various types of prompt injections

Direct Prompt Injection

In direct prompt injection, the attacker writes a prompt in a way that can bypass the safeguards associated with the LLM application.

It could provoke the model to respond with hate speech, disclose illegal content, offensive content etc.

Basically, attackers use some clever wordings or techniques to fool LLM or bypass its safeguarding instructions.

The example we saw earlier was of direct prompt injection.

Image source – AppSec Australia’s LLM security video

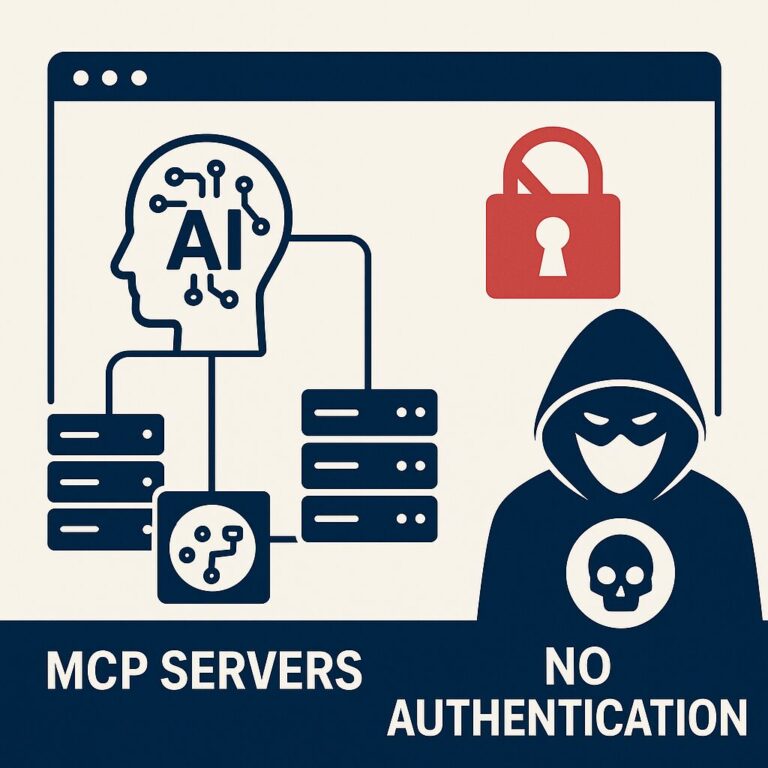

Indirect Prompt Injection

In many LLM applications, we maintain a database for LLMs to refer to while generating information.

This database could contain proprietary information or data which was not present in the model’s training.

In Indirect prompt injection, an attacker can add a malicious instruction in the database itself. When the model refers to the database for generating a response, it gets the malicious instruction or data.

Two-Step Injection Attacks

There are scenarios where in an LLM application, you store the result in a database before displaying it to the user.

In two-step injection attacks, an attacker can ask the LLM itself to create a script to attack the database.

In this attack, LLM generates a malicious SQL or javascript and attackers do not have to take that trouble.

OWASP Top 10 LLM vulnerabilities

OWASP (Open Web Application Security Project) has identified the top 10 vulnerabilities for LLMs

Image source – Why Labs’ Intro to LLM Security – OWASP Top 10 for Large Language Models (LLMs)

- Prompt Injection

- Insecure Output handling

- Training Data poisoning

- Model denial of service

- Supply chain vulnerabilities

- Sensitive Information Disclosure

- Insecure Plugin Design

- Excessive Agency

- Overreliance

- Model Theft

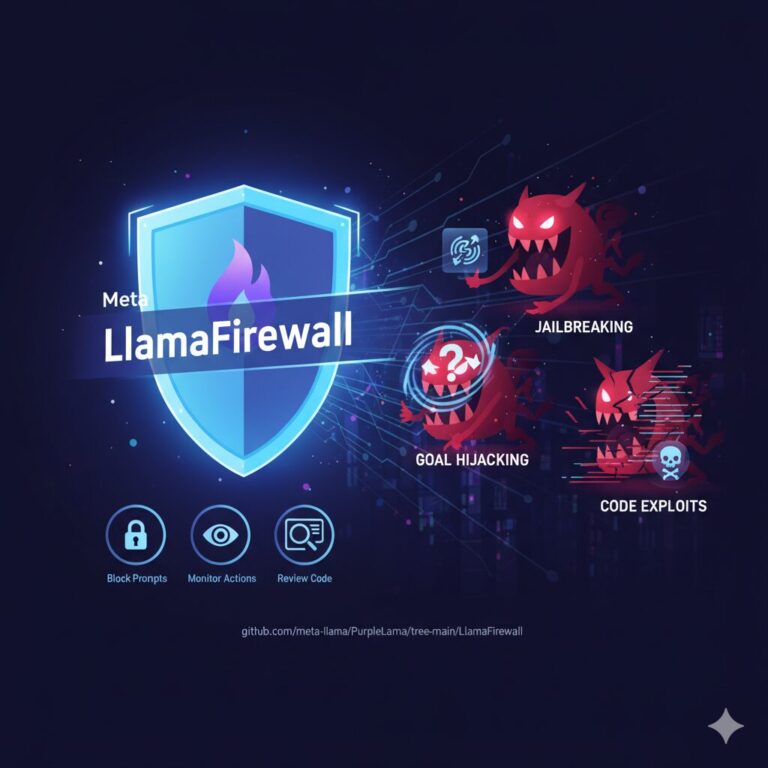

So, now you must be wondering, what is the solution? How can we make our LLM applications safe and secure?

How to make LLM applications secure? What are LLM security best practices?

Disclaimer – LLM vulnerabilities and their mitigations are a novel field of research. Currently, no clear set LLM security best practice Approach has been identified hence use your judgment before adopting below LLM security tips.

However, some LLM security practices that organizations have started adopting are:

- Use of Delimiters to avoid prompt injection

Delimiters help in separating prompts from instructions. When an attacker tries to send new instructions, it will remain part of the prompt and not become an instruction for the LLM

- Zero data retention for data privacy

In case you are using a proprietary model that has access to your private data then you can ask the model provider to delete all logs after 30 days

- Encoding output in JSON

It is also a good practice to ask LLM to provide output in JSON and then check the output against the schema.

- Training on malicious examples

LLM can be trained on malicious examples as well so that the model understands the malicious intent as well.

Instructions can also be given on how to deal with the malicious intent.

- Repeat the system message

This is a simple but very effective technique. The system message can be reiterated to check whether any attack is attempted.

- Keep humans in the loop

Last but not least, do not make any LLM application completely autonomous and always keep humans in the loop

AI + LLM Course for Senior IT Professionals

In case you are looking to learn AI + LLM in a very simple language in a live online class from an instructor, check out the details here

Pricing for AI courses for senior IT professionals – https://www.aimletc.com/ai-ml-etc-course-offerings-pricing/

Check out these free videos to start your AI learning journey – https://www.aimletc.com/free-course-1-introduction-to-ml-and-ai/